Generative AI and Wikipedia editing: What we learned in 2025

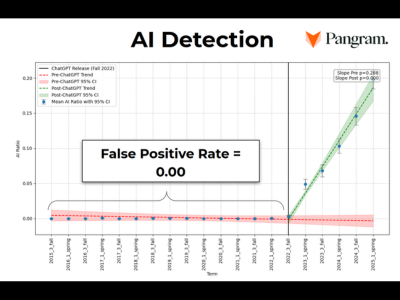

Wiki Education examined how often participants in its Wikipedia editing programs used generative AI, and what kinds of failures that use introduced. Using the Pangram detector, it flagged 178 of the 3,078 new articles created through its programs since 2022 (about 6%). Surprisingly, outright hallucinated citations were relatively rare in that set. The more dangerous pattern was plausible text paired with a real citation, where the cited source didn't actually support the claim.

That verification gap was serious enough that the organization spent significant staff time on cleanup: moving work back to sandboxes, stubbing articles whose content was mostly unverifiable, and recommending deletion where content was beyond repair. Going forward, it offers one blunt rule: don't copy and paste chatbot prose into Wikipedia. Instead, it is investing in early interventions (near-real-time detection, staff tickets, training modules, and automated emails) to steer newcomers away from drafting with LLMs before any such text reaches mainspace.

Wiki Education also outlines a narrower set of "safer" uses, namely gap finding, source discovery, and research brainstorming, in which humans still do the verifying and the writing. The practical takeaway for editors is to treat fluency as a liability: if you can't point to the exact sentence in the source that supports a claim, the claim doesn't belong in the encyclopedia.