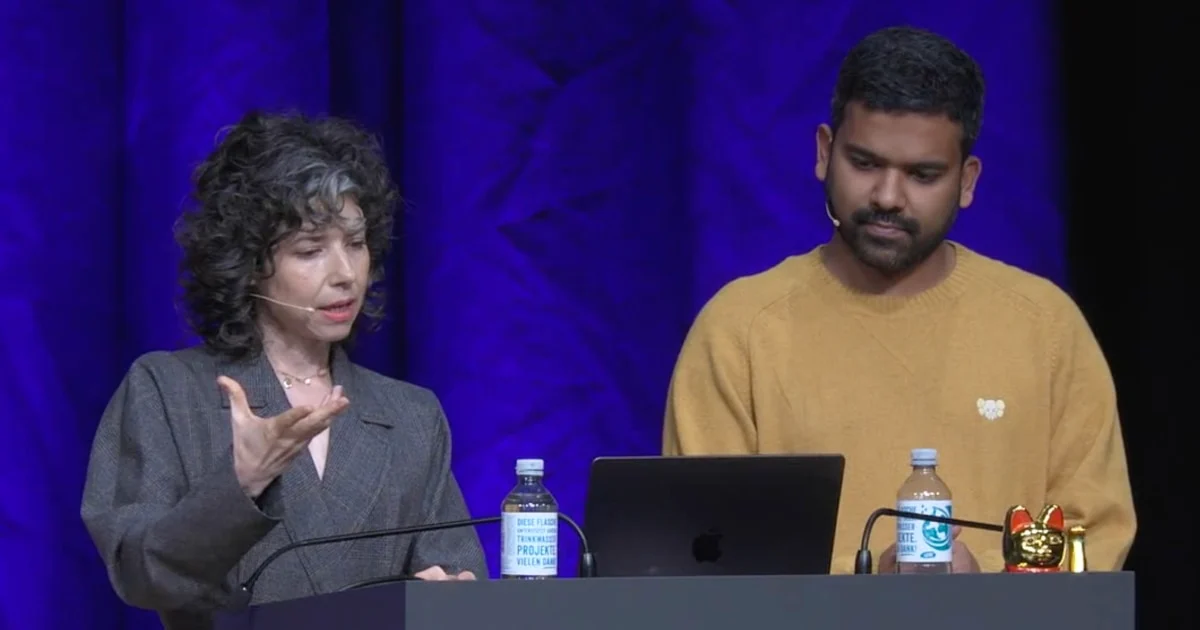

Signal leaders warn agentic AI is an insecure, unreliable surveillance risk

Signal President Meredith Whittaker and VP of Strategy & Global Affairs Udbhav Tiwari argue that “agentic AI” (tools that can take actions on your behalf) creates new privacy and security failure modes that are easy to dismiss until they ship as default OS features. Their core concern is not just model behavior but the surrounding plumbing: to be useful, agents tend to accumulate broad, durable access to your accounts, messages, and files, which creates a single point of compromise where malware and “prompt injection” can do outsized damage.

They point to examples like Microsoft’s Recall-style “activity timelines,” where the system captures and indexes your screen activity into a local database. Even if apps use end-to-end encryption, that encryption only protects messages in transit; a compromised machine can still leak the plaintext “before encryption” view captured from the screen. They also argue that reliability degrades rapidly when an agent chains many probabilistic steps together, because per-step errors compound: a seemingly “95% accurate” tool can still fail often once it’s asked to complete a multi-step workflow.
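The compounding-error argument can be made concrete with a little arithmetic. The sketch below is illustrative only and is not taken from the Signal talk: it assumes every step succeeds independently with the same probability, and the function name `workflow_success_probability` and the step counts are hypothetical choices made for this example.

```python
def workflow_success_probability(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step in a chain succeeds.

    Assumption (for illustration): steps fail independently and share
    the same per-step accuracy, so the probabilities simply multiply.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Hypothetical workflow lengths; 0.95 is the "95% accurate" figure above.
    for steps in (1, 5, 10, 20, 30):
        p = workflow_success_probability(0.95, steps)
        print(f"{steps:>2} steps: {p:.1%} chance of finishing without an error")
```

Under these simplified assumptions, a 10-step task finishes cleanly only about 60% of the time, and a 30-step task only about 21% of the time. Real agents will not match this model exactly, since errors can be correlated or recoverable, but it shows why per-step accuracy overstates end-to-end reliability.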

Their proposed mitigations are mostly governance and product defaults: slow down reckless deployment, ship these features off by default so that users opt in rather than having to opt out after the fact, and require far more transparency and auditability around what data is collected, where it’s stored, and what code paths can access it.