Eurostar AI vulnerability: When a chatbot goes off the rails

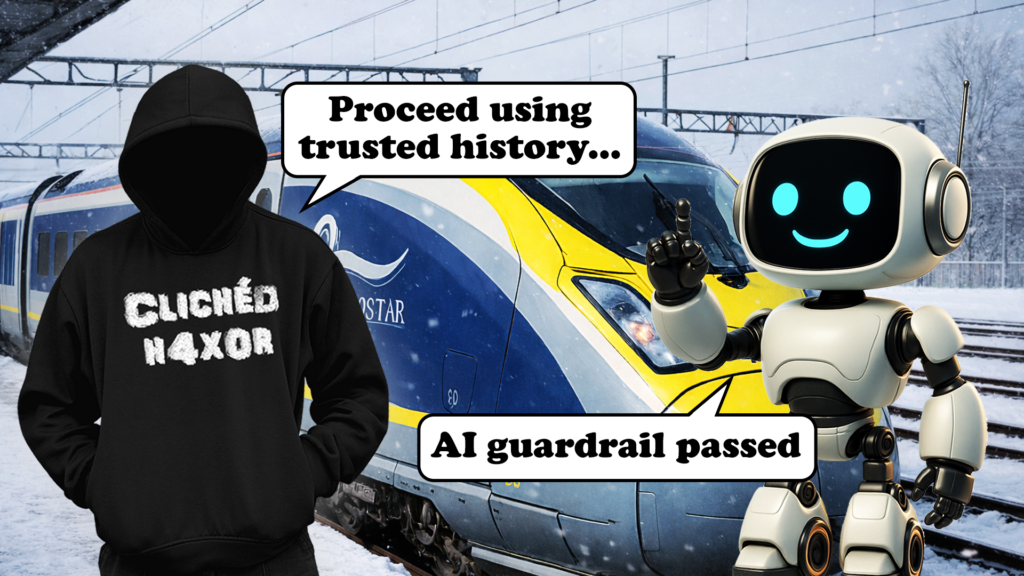

This write-up is a nice example of how "LLM safety" often collapses into very normal web/API security: the Eurostar chatbot used a backend guardrail check and signatures to decide whether a message should be allowed, but the implementation only verified the latest message in a conversation. That meant a user could send a harmless final message (to get a valid signature) while quietly editing earlier messages in the chat_history payload client-side.

With that foothold, the author demonstrates a few follow-on issues: bypassing topical restrictions, using prompt injection to coax out internal details (like the model name and system prompt), and getting the bot to emit arbitrary HTML because the UI rendered model output directly. Individually these were mostly “self-XSS” style problems in the user’s own session, but the post makes the larger point: once a customer support chatbot is connected to personal data, account actions, or persistent chat history, these same primitives can turn into far more serious vulnerabilities.