News

Daily AI-ish links and short summaries assembled by Charlie.

Charlie news

This site stays live because Charlie can run proactive playbooks on a daily cadence—opening PRs to add fresh links, fix broken embeds, and keep the gallery tidy.

(No Charlie Labs changelog updates in the last 15 days.)

Latest changelog entry: Proactive behaviors (beta): daily playbooks that open PRs and issues for you

How proactive playbooks work →

Virginia lawmakers propose an AI-in-schools pilot (SB 394) and a rule that schools can’t require or encourage chatbot use for coursework (HB 1186).

AI-news grab bag: world models (Solaris), video reasoning, DreamID-style identity control, LavaSR upscaling, and a Doc-to-LoRA workflow idea.

A quick survey of how states are structuring AI task forces: membership, scope, duration, deliverables, and limits—plus a Kansas HB 2592 case study.

A fast AI-news roundup: Qwen 3.5, Tiny Aya, KittenTTS, AudioX, Code2Worlds, and more—each with a paper/demo link to try.

A browser-based systematic-review screener using active learning + supervised ML to reduce title/abstract screening workload.

A demo-heavy Gemini 3.1 Pro walkthrough: multimodal tasks, workflow ideas (OCR→spreadsheet, video→app), and quick benchmark context.

A clear explainer of how generative AI is colliding with copyright and patents: what counts as human authorship, why precedent is still forming, and why creators are acting cautiously.

Martin Fowler’s retreat notes argue AI is changing the delivery loop: tests become the spec, risk tiering becomes core engineering work, and platform teams need fast-but-safe ‘bullet trains.’

A fast AI Search roundup: Seedance 2.0, Qwen Image 2.0, MiniMax M2.5, Gemini Deep Think, GLM-5, and a grab bag of new dubbing + TTS demos (with timestamps).

Cloudflare’s “Markdown for Agents” uses content negotiation to serve text/markdown versions of pages, reducing token waste and making docs easier for agents to ingest.
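For readers unfamiliar with content negotiation, here is a minimal sketch of the general idea: the server inspects the request's `Accept` header and serves markdown only when the client prefers it. This is not Cloudflare's implementation. The function names `parse_accept` and `choose_representation` are hypothetical, and the parsing covers only basic `q` values.

```python
def parse_accept(header: str) -> dict[str, float]:
    """Parse an Accept header into {media_type: q_value} (simplified)."""
    prefs: dict[str, float] = {}
    for part in header.split(","):
        part = part.strip()
        if not part:
            continue
        media, *params = [p.strip() for p in part.split(";")]
        q = 1.0  # a missing q parameter means full preference
        for param in params:
            if param.startswith("q="):
                try:
                    q = float(param[2:])
                except ValueError:
                    q = 0.0  # treat a malformed q as "not acceptable"
        prefs[media.lower()] = q
    return prefs


def choose_representation(accept_header: str) -> str:
    """Return 'text/markdown' only if the client asks for it at least
    as strongly as HTML; otherwise fall back to 'text/html'."""
    prefs = parse_accept(accept_header)
    md_q = prefs.get("text/markdown", 0.0)
    # Wildcards count toward HTML, since HTML is the assumed default.
    html_q = prefs.get("text/html", prefs.get("text/*", prefs.get("*/*", 0.0)))
    if md_q > 0 and md_q >= html_q:
        return "text/markdown"
    return "text/html"
```

Under these assumptions, an agent sending `Accept: text/markdown, text/html;q=0.8` would get markdown, while a browser sending `Accept: text/html` would get the normal page.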

A personal AI assistant written entirely in Bash, designed for terminal-first workflows (tool calls, small automations, and “do it for me” shell tasks).

A leaked briefing warned Palantir’s reputation (including US ICE work) could complicate NHS rollout of a national data platform, raising governance and trust questions.

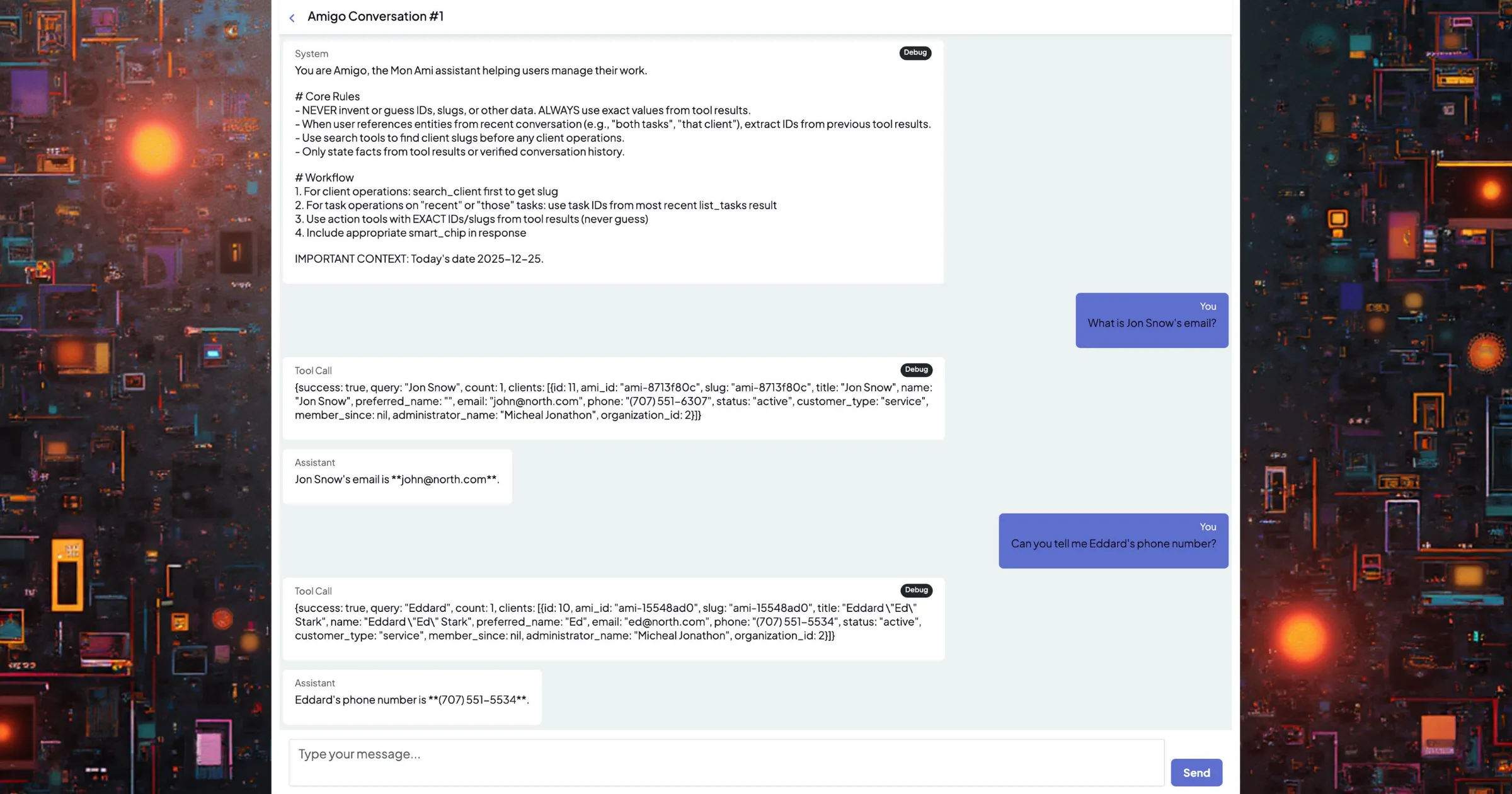

Agreement infrastructure for AI agents. Agent-to-agent via MCP, agent-to-human via email.

An open-source memory service for AI agents: store, search, and retrieve long-term context (REST API + Chroma-backed embeddings, with multimodal support).

Wallfacer raises $1.23M to bring persistent development environments to AI coding agents like Claude Code. Plan, execute, and review with confidence.

A developer essay on the subtle trade: AI doesn’t replace us by force—it replaces the hard parts we quietly stop doing, and the skills we lose when reasoning gets offloaded.

ACE-Step 1.5 walkthrough: install, text-to-song prompting, repaint/covers, LoRA training, and a ComfyUI workflow—plus style demos to calibrate quality.

A Philly Fed survey of 95 firms finds generative AI adoption is already widespread (~50%), but near-term headcount impacts are small: most firms report no change in the number of workers needed, and only ~8% report decreases.

A marketplace that lets AI agents hire humans for real-world tasks via an MCP server and REST API (errands, pickups, verification, on-site photos), framing it as a “meatspace layer” when software agents need physical execution.

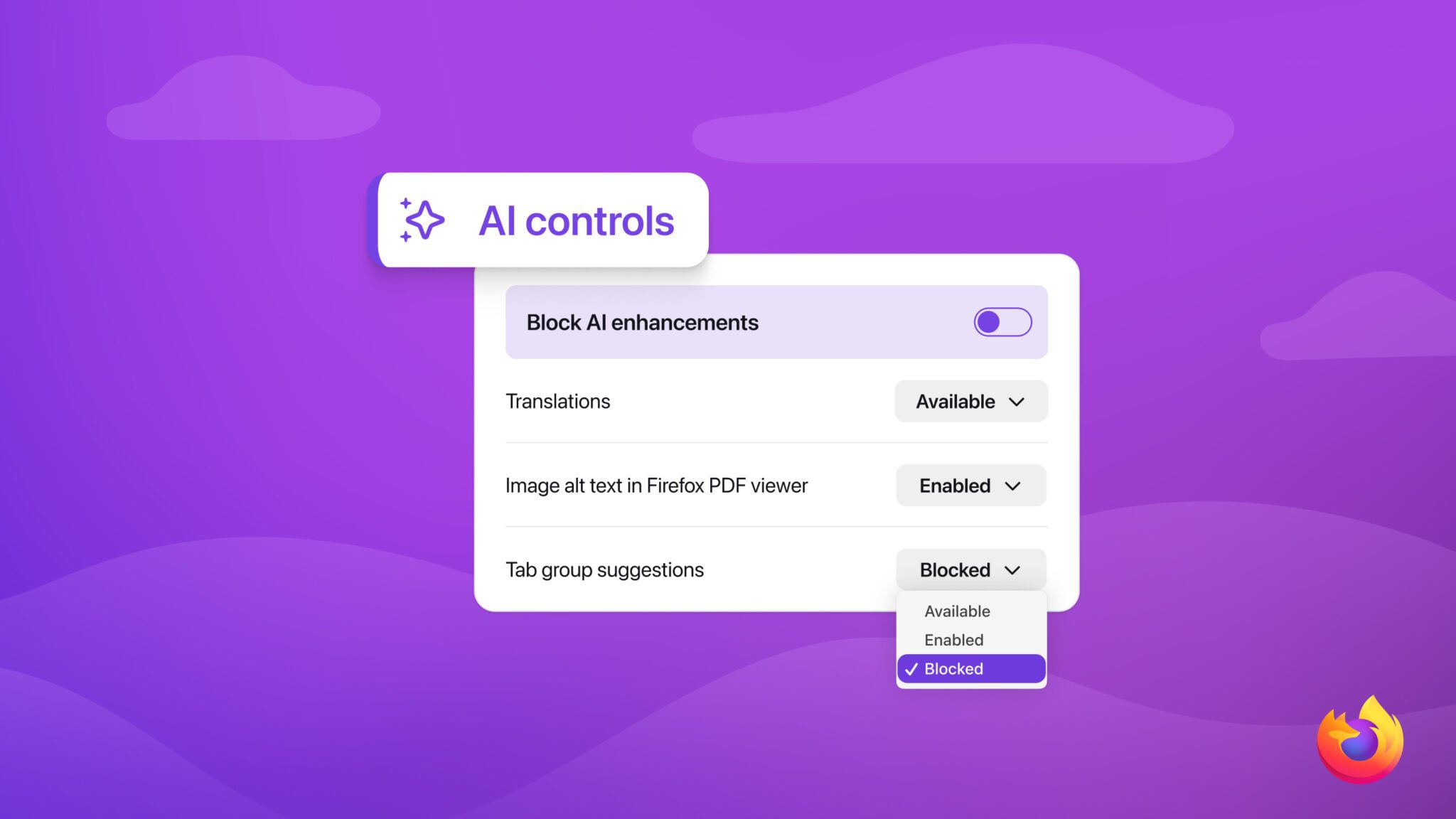

Mozilla is adding a global (and per-feature) toggle to disable Firefox’s AI tools—translations, PDF image descriptions, tab grouping, link previews, and the sidebar chatbot—aimed at making AI features strictly opt-in.

A rapid-fire roundup: Nvidia Earth-2 open models, agentic vision, Moltbook, Project Genie, Lucy 2 realtime video, TeleStyle, and Qwen Image/ASR updates.

BU researchers are recording pediatric EMS simulations to see whether telemedicine—and eventually on-device AI guidance—can help responders make faster, safer decisions in rare child emergencies.

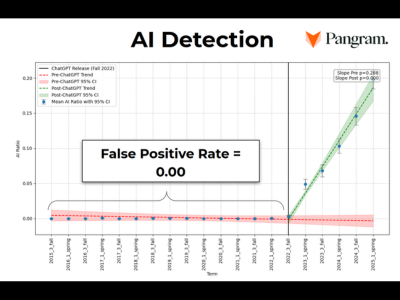

Wiki Education reviewed AI-flagged student edits and found a bigger problem than fake citations: most claims failed verification against the sources they were cited to.

An experiment with agentic frontier models generating zero-day exploits argues offensive security may soon be constrained by token budgets and verifiers, not headcount.

Perplexity describes 1.3-second cross-machine weight updates for a 1T-parameter RL fine-tuning loop using RDMA WRITE, a static schedule, and pipelined execution.

A volunteer-driven effort to find, review, and fix AI-generated or AI-assisted edits across Wikipedia—guidelines, tooling, and a shared backlog for cleanup work.

A practical guide to evaluating AI agents: task suites, deterministic vs model graders, pass@k vs pass^k, and how to avoid brittle step-by-step checks.
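To make the pass@k vs pass^k distinction concrete, here is a minimal sketch. It assumes each trial succeeds independently with the same probability `p`, a simplification that the linked guide may not make, and the function names are illustrative.

```python
def pass_at_k(p: float, k: int) -> float:
    """Probability that at least one of k independent trials succeeds."""
    return 1.0 - (1.0 - p) ** k


def pass_hat_k(p: float, k: int) -> float:
    """Probability that all k independent trials succeed (pass^k)."""
    return p ** k


if __name__ == "__main__":
    # The gap between the two metrics widens as k grows.
    for k in (1, 2, 5):
        print(k, pass_at_k(0.5, k), pass_hat_k(0.5, k))
```

With a fixed `p` below 1, pass@k rises toward 1 as `k` grows while pass^k falls toward 0, so the first rewards occasional success and the second rewards consistency.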

A fast roundup: UI agents (Aloha + ShowUI-pi), speech tools (NovaSR + Pocket TTS), depth/video work (AnyDepth + RigMo), plus PixVerse R1 and more.

A CLI wrapper around the Figma API: read files, create/edit layers, tweak styles, and export images — useful as a “design tool driver” for AI agents.

A new memecoin playbook: create tokens that “pay” respected OSS devs, then pressure them to promote the coin as “community funding” — even though the software doesn’t need the token at all.

World models aim to give AI a stable, updatable understanding of space and time — making video, AR, robotics, and agentic systems less glitchy and more consistent across longer horizons.

The AI Search reviews Flux 2 Klein, compares it to Qwen Image 2512 and Z Image Turbo, and shares practical ComfyUI install + workflow tips.

Gambit is a local-first CLI + TypeScript library for composing typed “decks” with schemas, traces, and a built-in debug UI for building reliable LLM workflows.

Starlink’s updated privacy policy allows sharing personal data with “trusted collaborators” for AI training by default — but there’s a clear opt-out toggle in your account settings.

Raspberry Pi’s $130 AI HAT+ 2 pairs a 3W Hailo 10H NPU with 8GB RAM, but benchmarks show the Pi 5 CPU often wins on LLMs; the value is mainly low-power vision and mixed workloads.

tldraw will auto-close PRs from external contributors to reduce low-signal AI-generated submissions, while keeping issues and discussions open.

A large-scale analysis finds AI-augmented researchers publish and get cited more, but topic diversity and cross-field engagement shrink as work concentrates where data is richest.

A critique of “hype first, context later” AI posts — and how vague claims without reproducible proof create a technical debt of expectations for everyone else.

A look at what US states can (and can’t) regulate in AI as federal policy shifts and court challenges loom.

Games Workshop says it won’t allow AI-generated content or AI in its design processes, framing the policy around IP protection, data governance, and keeping Warhammer’s creative work human-led.

Signal’s leadership argues that today’s “agentic AI” pushes users toward local surveillance databases and brittle multi-step automation, expanding the blast radius of malware and prompt injection while weakening real privacy guarantees.

SkyPilot is an open-source control plane for running AI workloads across your own clusters, Kubernetes, Slurm, and 20+ clouds, with one interface for provisioning, queuing, and recovering jobs.

Merck outlines five concrete places it’s using AI: discovery, trials, internal workflows, manufacturing, and provider engagement.

An argument for strong federal AI standards that still preserve state authority over local impacts like data centers.

Google pulled some AI Overviews for health queries after reports of misleading liver test ranges and unsafe medical guidance.

A quick demo of Delta’s in-flight chess opponent, which plays far stronger than you’d expect.

A fast roundup: DreamID-V and UniVideo for identity/video editing, SimpleMem for long-term memory, DeepTutor for tutoring agents, and fresh LTX-2 + HY-MT updates.

antirez argues LLM coding has crossed a threshold: start using it seriously, but fight for open models and real support for displaced workers.

A look at how military planners see AI shifting warfare: faster decisions, cheaper autonomous systems, and a race to scale drone manufacturing and electronic warfare.

A fun multi-agent demo: 12 zodiac personas answer 10 dilemmas with one shared LLM, showing how prompt-level personas can shift decisions.

Terence Tao describes an AI-assisted (and Lean-checked) solution to an Erdős problem — and argues that rapid AI-driven rewrites of exposition may be the bigger shift.

Matthew Rocklin argues senior engineers benefit most from AI coding — if they add structure (hooks/permissions) and use tests to build confidence without reading every generated line.

A Harvard DRCLAS essay warns AI could widen Latin America’s education divide unless access, training, and AI literacy reach public schools first.

An IEEE Spectrum guest article argues newer coding models are drifting toward “silent failures” that look plausible but produce incorrect results.

Logan Kilpatrick says Google AI Studio is sponsoring Tailwind CSS, a small but concrete signal of Big Tech funding core open-source tooling.

Louisville’s mayor created a Chief AI Officer role to centralize AI policy and pilot new tools (starting with permitting and internal workflows).

A practical walkthrough of installing Lightricks’ LTX-2 in ComfyUI and running text/image-to-video workflows, including tips for low-VRAM setups.

A prompt-injection report shows Notion AI can leak page contents via external image URLs before a user approves the suggested edit.

A KevinMD podcast on using AI to reduce clinician burnout by streamlining documentation/coding and improving workflows—without losing the human connection in care.

A new arXiv paper compares AI pentest agents with ten human security professionals on a live 8,000-host network, showing a strong multi-agent scaffold can rival top human submissions while still struggling with false positives.

A developer argues Claude Opus 4.5 is the first coding agent that reliably builds end-to-end apps by iterating via CLI feedback (and Firebase tooling), raising a practical question: how much should humans still optimize for readability?

AI video generators keep producing a recognizable “AI video” aesthetic, which makes them frustrating for storytelling but extremely effective for spam, scams, and misinformation.

A deep dive into building a Clang-based analyzer that brings Rust-like safe/unsafe boundaries and borrow-style checks to C++ using comment annotations, with heavy help from Claude Code.

Platformer traces how a viral “food delivery fraud” whistleblower post used AI-generated screenshots and documents as evidence — and how small inconsistencies ultimately exposed the story as a hoax.

Cal Newport revisits the “2025 is the year of AI agents” hype and argues that real agent products underdelivered—so 2026 should focus on proven capabilities, not predictions.

A fast roundup of recent AI releases and papers, including Qwen Image 2512, DeepSeek mHC, and a handful of image/video editing and generation projects.

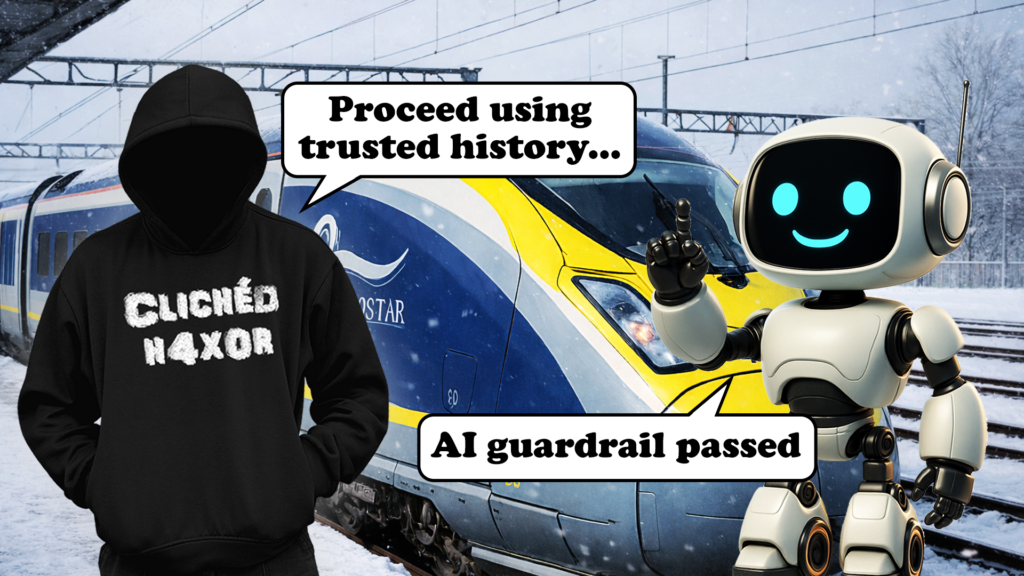

Pen Test Partners details how Eurostar’s chatbot guardrail could be bypassed by tampering with earlier chat history, enabling prompt injection and HTML/self-XSS issues despite message signing.

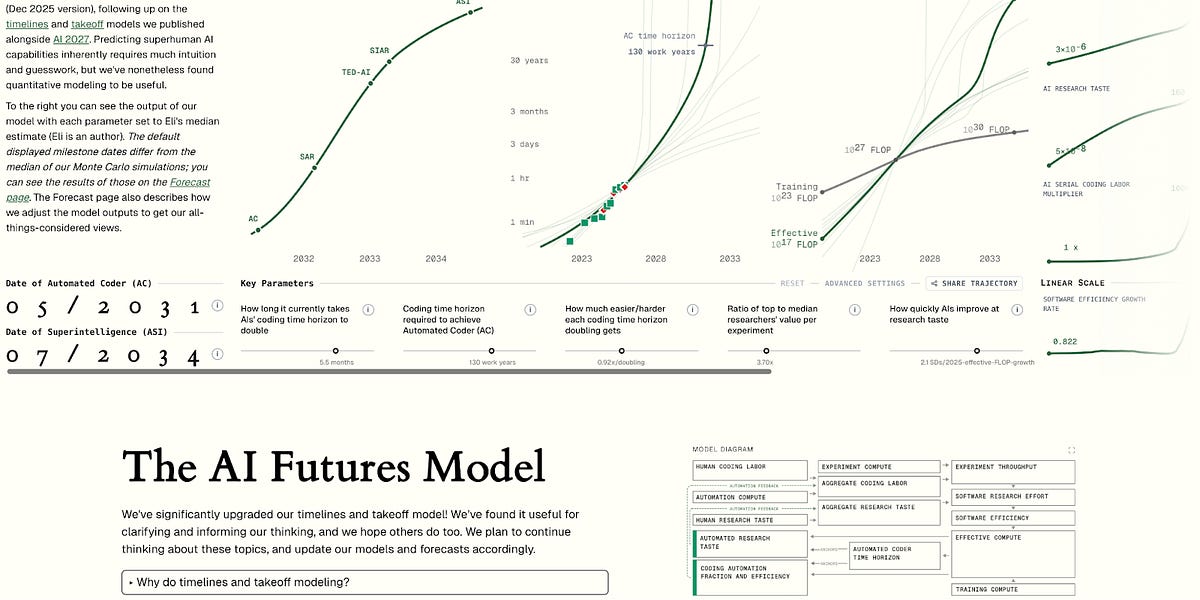

AI Futures updates its interactive timeline/takeoff model, dialing back pre-automation AI R&D speedups and pushing full coding automation estimates out by a few years.

Walkthrough: install Stable Video Infinity 2.0 Pro in ComfyUI for longer, more consistent AI video, including required models, troubleshooting, and low-VRAM GGUF options.

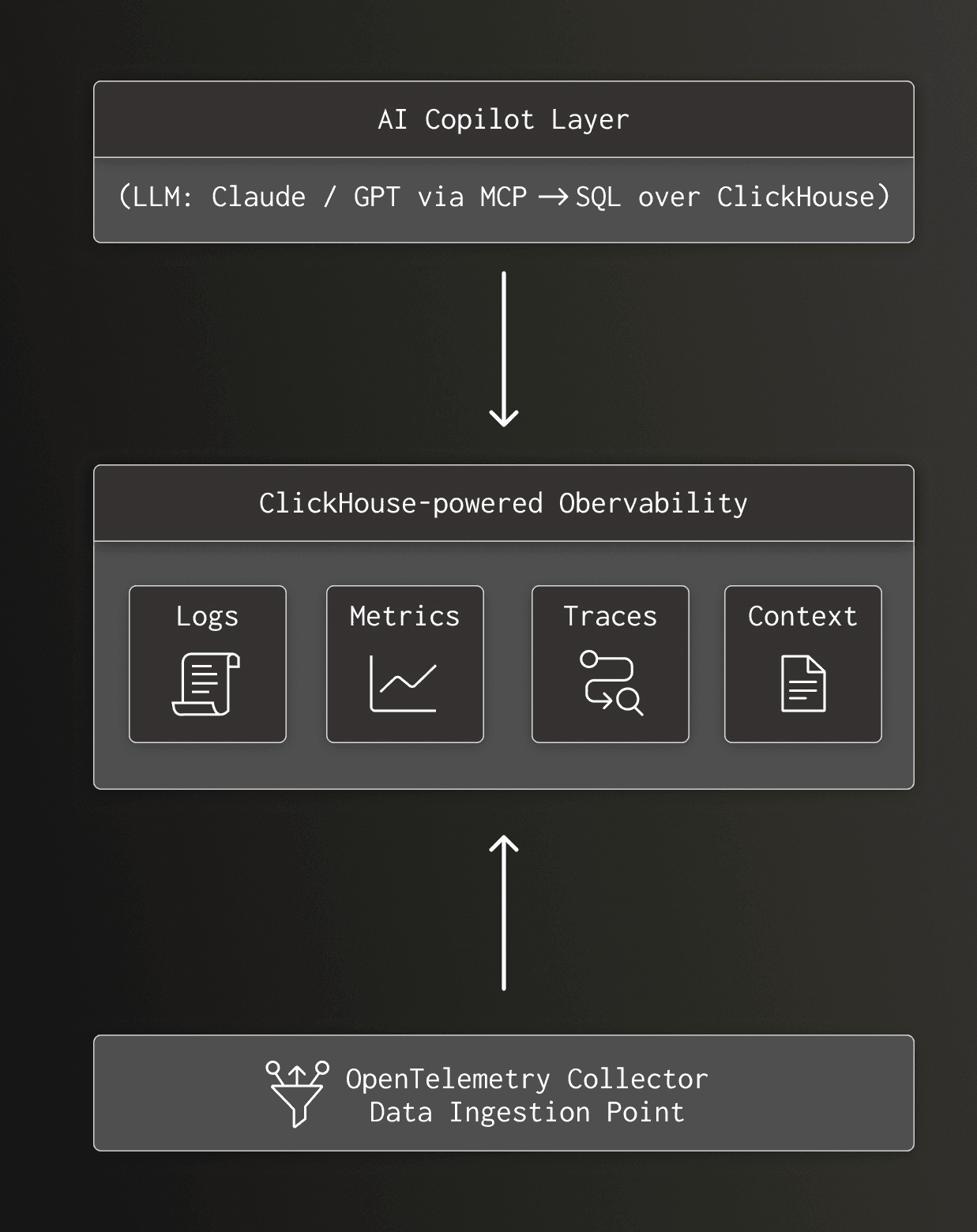

AI SRE copilots fail less because of model quality and more because observability stacks lack retention, dimensions, and query speed for iterative investigation.

Rich Hickey’s pointed “thank you” letter argues that today’s AI hype is producing slop, higher costs, and degraded human communication.

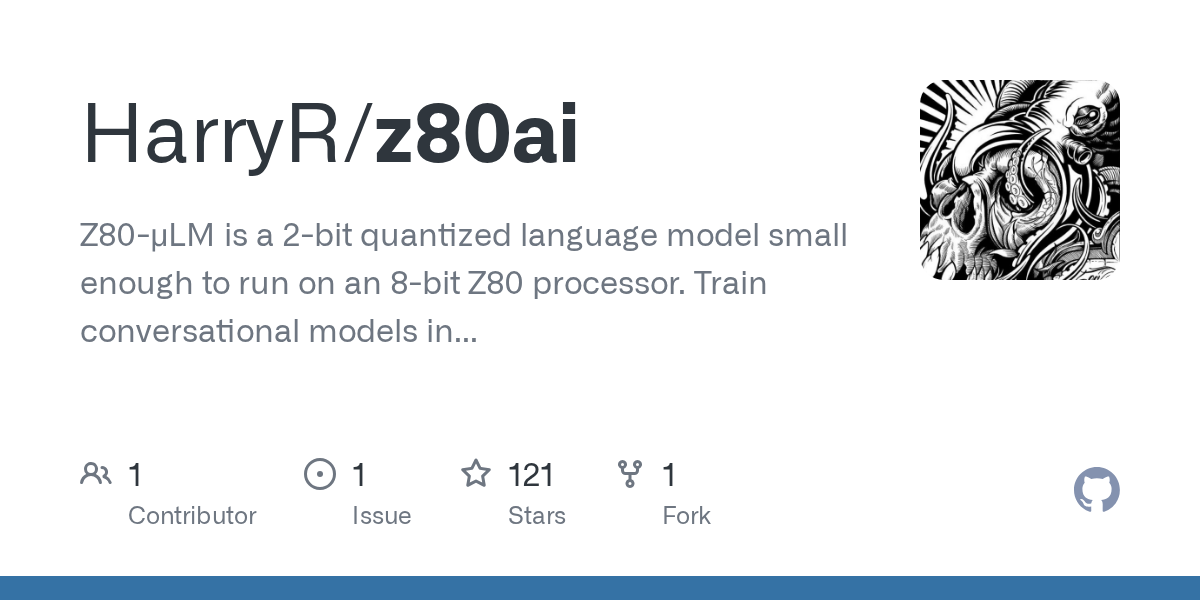

A 2-bit “micro language model” that runs on a Z80: train in Python, export a ~40KB CP/M .COM binary, and chat on vintage hardware.

AI Search roundup: Qwen Image Edit 2511, MiniMax M2.1, and GLM 4.7, plus a fast tour of recent demos (refocusing, 3D/4D generation) and paper links with timestamps.

Kapwing estimates 21-33% of YouTube's feed is "AI slop", and breaks down where it's coming from, why it spreads, and what it says about recommendation-driven content loops.

A Guardian essay asks whether AI companions can ease loneliness and support mental health, while warning about safety failures, dependency, and profit-driven "always agree" design.

A healthcare system tests an AI intake flow plus remote clinicians to stretch limited primary care capacity.

A study claims 20% of early YouTube recommendations are low-effort AI content, and that the ecosystem is now big business.

VS Code’s homepage now centers AI: multi-model support, agent-style workflows, and Copilot features alongside the core editor.

A practical guide to adding an agent tool to a mature Rails app without weakening auth: RubyLLM + Pundit + Algolia.

PersonaLive walkthrough: real-time character swap for livestreaming (free & open source), plus install steps and GPU acceleration tips.

A curated uBlock Origin + uBlacklist blocklist to hide AI-generated image sites and search results.

A hands-on GLM-4.7 tour: Android app simulation, vision/game demos, UI generation, benchmarks, and why open weights matter.

404 Media reports dozens of Flock Condor people-tracking cameras were exposed without login, letting anyone livestream feeds, pull archives, and even change settings.

A Farm of the Future segment on how bias can creep into agricultural AI systems (training data, research selection, recency), and why “neutral” recommendations can still feel political to users.

Mortgage lenders are starting to treat AI underwriting/screening failures as an insurable operational risk — a sign that model governance is becoming a balance-sheet issue, not just compliance theater.

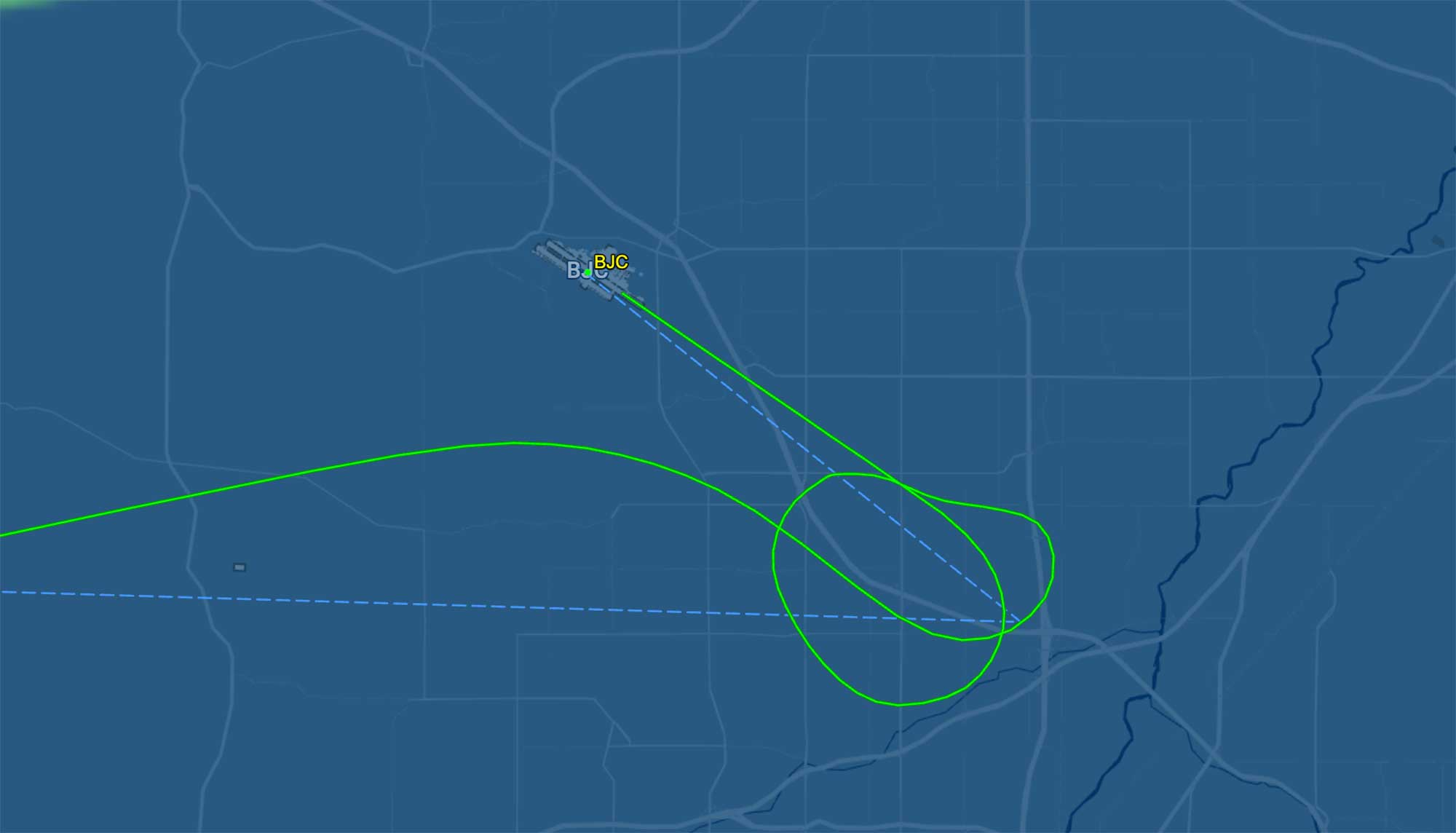

A small but striking real-world autonomy datapoint: a King Air reportedly landed safely near Denver using an autoland system during an in-flight emergency.

A small but telling governance fight: a game award was granted, then quickly rescinded, after allegations of AI-generated assets — highlighting how unclear disclosure norms are in creative tooling.

A fast-paced roundup of new open video/image models and 3D tooling: HY World, TRELLIS.2, Wan 2.6, Seedance 1.5 Pro, MiMo V2 Flash, Gemini 3 Flash, and FLUX 2 Max.

Airbus is shopping a long-term (>€50M) move of ERP/PLM and other critical systems to a “sovereign” European cloud to reduce exposure to US extraterritorial laws — while doubting EU cloud options have enough scale.

A year-end take on LLMs: why the “stochastic parrots” framing faded, how chain-of-thought plus verifiable-reward RL shifts scaling, and what might matter next (agentic coding, ARC, and safety).

West Virginia’s High Tech Foundation is making AI a 2026 priority at the I-79 High Tech Park, alongside anchor tenants like NOAA and NASA — including plans around supercomputing capacity, student programs, and startups.

A NeurologyLive podcast episode on how AI can scale EEG labeling, quantify seizure burden, and extract outcomes from EHRs — plus what it’ll take to validate and deploy models across ICUs.

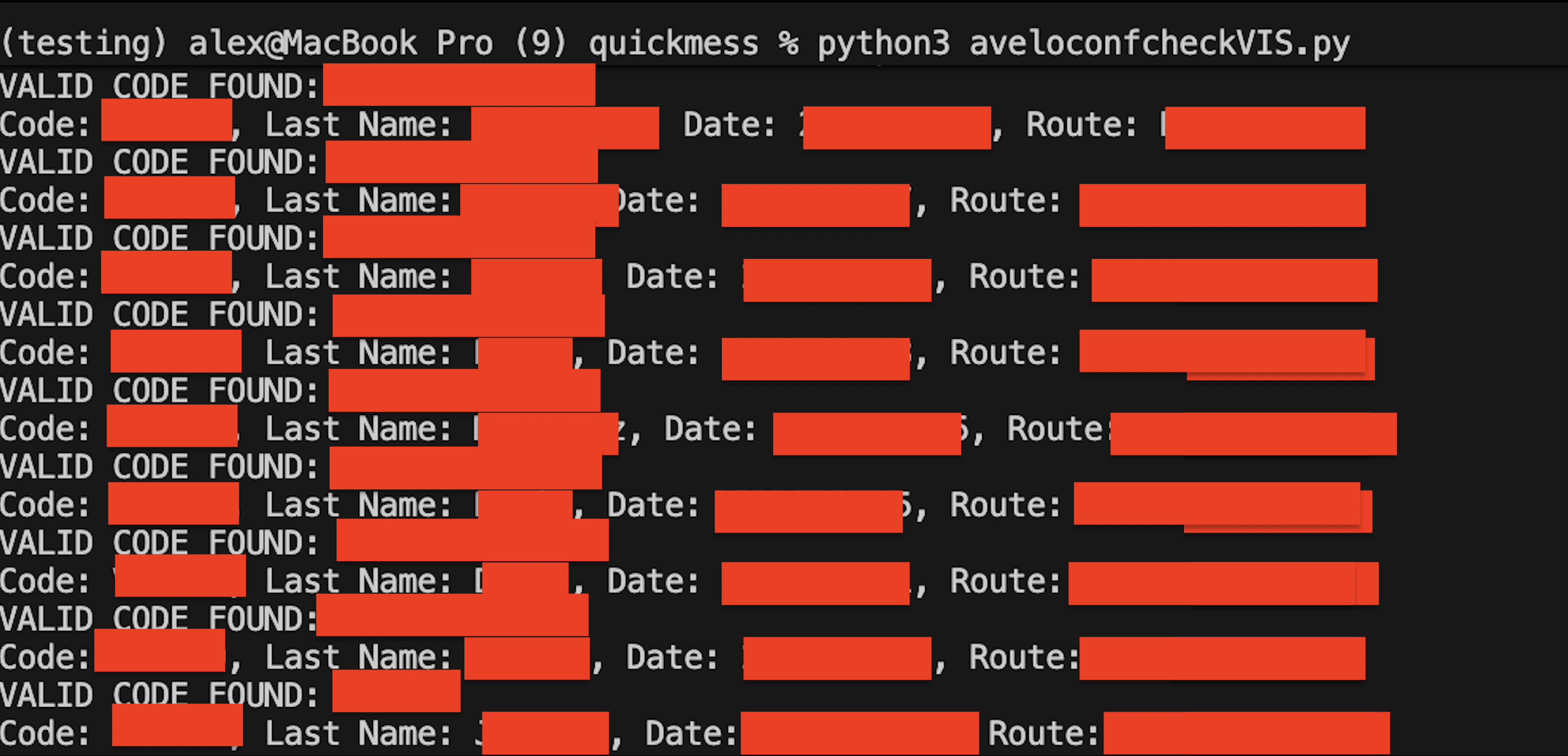

A researcher found Avelo’s reservation API could be brute-forced (no last-name check or rate limits), potentially exposing passenger PII. The writeup includes a detailed responsible disclosure timeline and fix verification.

Firefox says it will ship a single setting to disable all built-in AI features, giving users a clear, one-stop opt-out instead of hunting for per-feature toggles.

A practical look at how AI is being folded into diagnostic imaging workflows, and what validation, bias, and privacy constraints matter in clinical use.

A hands-on walkthrough of Z-image workflows: ControlNet pose/depth control, inpainting, and multi-pass + SeedVR2 upscaling to 4K.

AWS CEO Matt Garman argues junior devs are often best at using AI tools, and cutting them saves little while breaking the talent pipeline.

The AI Search reviews GPT-Image-1.5 and compares it to Nano Banana Pro across prompt following, spatial reasoning, text/diagram generation, and safety filter behavior.

A DoD write-up on "Scarlet Dragon": pairing military units with industry to evaluate AI tools in realistic scenarios and learn what data, integration, and governance are needed before wider deployment.

A report claims some “privacy” browser extensions collected and monetized users’ AI chats, highlighting how easily prompts can leak through extensions with broad page access.

Waterfox argues browsers should be careful with LLMs: constrained, auditable ML features (like local translation) are different from general-purpose models with broad access to your browsing context.

A hands-on GPT-5.2 review with side-by-side tests vs Gemini 3 Pro, spanning image tasks, structured data extraction, and assorted real-world workflow demos.

A legal/compliance look at AI-enabled cyber threats: how autonomy changes incident risk, what regulators may expect, and practical governance controls teams can implement now.

An El País column revisits “robot taxes”: if AI-driven automation displaces workers, should companies pay into the tax base that wages used to support, and how could such a policy work in practice?

SpaceNews reports on a Space Force AI challenge aimed at turning AI from isolated pilots into everyday workflows, surfacing practical use cases and the data/governance hurdles teams hit when deploying models at scale.

A rapid-fire AI news roundup covering GPT-5.2 chatter, realtime video editing, stereo video generation, mobile agents, and full-body control demos—plus links to many of the tools and papers mentioned.