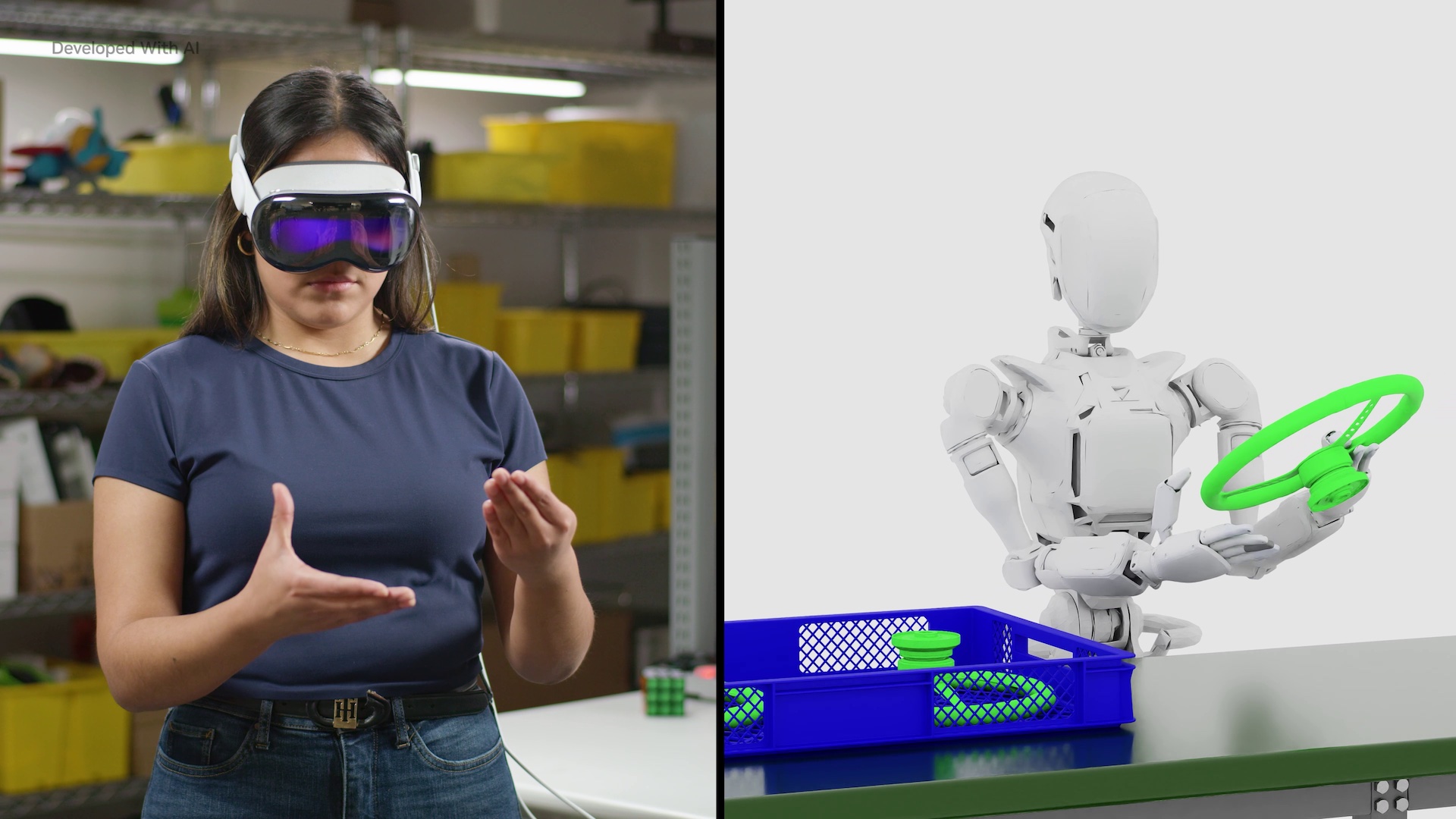

Synthetic Manipulation Motion Generation for Robotics

This Isaac GR00T blueprint is a reference workflow for turning a small number of human demonstrations into a much larger synthetic dataset of robot manipulation trajectories. The core idea is straightforward: instead of collecting thousands of real-world rollouts, you use simulation and generative augmentation to create motion variants that cover more edge cases than your original demos.
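To make the "few demos in, many trajectories out" idea concrete, here is a deliberately simplified sketch. It is not the blueprint's actual generation method: it only applies a random rigid shift in the x-y plane to one recorded trajectory, as if the target object had been placed somewhere slightly different. `Waypoint`, `make_variants`, and the 5 cm offset bound are all hypothetical names and values chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Waypoint:
    """End-effector position in metres (hypothetical, simplified representation)."""
    x: float
    y: float
    z: float


def make_variants(demo, n_variants, max_offset=0.05, seed=0):
    """Return n_variants copies of demo, each rigidly shifted in the x-y plane.

    Toy stand-in for a real generator: every waypoint in a variant gets the
    same (dx, dy) offset, and z is left unchanged.
    """
    rng = random.Random(seed)  # seeded so the output is reproducible
    variants = []
    for _ in range(n_variants):
        dx = rng.uniform(-max_offset, max_offset)
        dy = rng.uniform(-max_offset, max_offset)
        variants.append([Waypoint(w.x + dx, w.y + dy, w.z) for w in demo])
    return variants


# One hand-written "demonstration" with three waypoints (made-up values).
demo = [Waypoint(0.0, 0.0, 0.2), Waypoint(0.1, 0.0, 0.1), Waypoint(0.2, 0.0, 0.05)]
variants = make_variants(demo, n_variants=100)
print(len(variants), "variants from 1 demo")
```

A real pipeline would also re-simulate each variant and check that the task still succeeds, since a shifted trajectory is not guaranteed to be feasible or collision-free.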

The implementation is packaged as a Docker-deployed Jupyter workflow built on NVIDIA Omniverse / Isaac tooling, with an explicit callout that Cosmos runs best on a separate, more powerful node. The published prerequisites are not “toy” specs (Ubuntu 22.04, an NVIDIA GPU, and a separate H100-class GPU for Cosmos), which is a good signal that this is aimed at teams doing serious robotics dataset generation rather than quick demos.

What to try first: run the notebook end-to-end once with the provided sample assets to see what the generated trajectories look like and where the workflow spends time (simulation vs. generation vs. post-processing). After that, the first useful customization is usually swapping in your own robot/task assets and tightening the success criteria so your synthetic data doesn’t drift away from the behaviors you actually want.
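As a rough illustration of what "tightening the success criteria" can look like, the sketch below filters generated rollouts by two checks: how close the object ended up to its goal, and whether the robot stayed under a joint-speed limit. The `Rollout` fields, the thresholds, and the sample values are assumptions made up for this example. They are not part of the blueprint's notebook or API, so adapt them to whatever your own task actually logs.

```python
import math
from dataclasses import dataclass


@dataclass
class Rollout:
    """Summary of one generated trajectory (hypothetical fields)."""
    name: str
    final_object_pos: tuple  # (x, y, z) in metres
    goal_pos: tuple          # (x, y, z) in metres
    max_joint_speed: float   # rad/s


def is_success(r, pos_tol=0.02, speed_limit=2.0):
    """Keep a rollout only if it ends near the goal and never moves too fast."""
    dist = math.dist(r.final_object_pos, r.goal_pos)
    return dist <= pos_tol and r.max_joint_speed <= speed_limit


# Made-up rollouts for illustration only.
rollouts = [
    Rollout("a", (0.50, 0.20, 0.10), (0.50, 0.20, 0.10), 1.2),
    Rollout("b", (0.55, 0.20, 0.10), (0.50, 0.20, 0.10), 1.0),  # ends 5 cm from goal
    Rollout("c", (0.50, 0.21, 0.10), (0.50, 0.20, 0.10), 3.5),  # exceeds speed limit
]

kept = [r.name for r in rollouts if is_success(r)]
print(kept)
```

Loosening `pos_tol` to 0.06 would let rollout "b" through, which is the kind of drift a tighter criterion is meant to catch before it reaches the training set.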

Source listing: https://build.nvidia.com/blueprints?filters=publisher%3Anvidia